How Anthropic's Claude AI Was Used in the U.S.-Israel Bombing of Iran

Anthropic’s Claude models almost certainly played a central role in Operation Epic Fury: fusing intelligence, validating targets with strict ROE guardrails, running thousands of war-game simulations, and enabling real-time adaptive planning. Despite the public ban, deep classified integration made abrupt removal impractical, highlighting frontier AI’s irreversible shift into modern warfare.

In late February 2026, Anthropic, the San Francisco-based AI safety company behind Claude, found itself at the center of one of the most consequential intersections of artificial intelligence and military force in history. After months of escalating tension with the Pentagon over the terms of its $200 million defense contract, Anthropic refused to remove safety guardrails from its models. President Trump responded by banning the company from all federal agencies and designating it a "supply chain risk." Hours later, U.S. Central Command launched Operation Epic Fury against Iran and was still using Claude for intelligence assessments, target identification, and combat scenario simulations despite the ban.

This account traces the full arc: from Anthropic's founding as a safety-first AI company, through its deepening partnership with the U.S. military and intelligence community, to the dramatic confrontation with the Pentagon, Trump's ban, and Claude's reported role in one of the most significant U.S. military operations in decades.

Anthropic's Path Into U.S. Defense and Intelligence

The Palantir Partnership and Classified Networks

Anthropic's entry into the national security world accelerated in November 2024, when it signed a three-way partnership with Palantir Technologies and Amazon Web Services to deploy Claude on an IL6-certified classified network. This made Anthropic the first frontier AI company to deploy its models on the U.S. government's classified networks, the first at the National Laboratories, and the first to provide custom models for national security customers.

Through the Palantir partnership, Claude was integrated into systems used by federal employees for writing, data analysis, and complex problem-solving. More significantly, it enabled Claude to process classified data for intelligence and defense applications.

The $200 Million Pentagon Contract

In July 2025, the Pentagon awarded Anthropic a prototype Other Transaction Agreement with a ceiling of $200 million. Anthropic was one of four AI firms, alongside OpenAI, Google, and xAI, to receive contracts of this scale. Claude rapidly became embedded across the Department of War (as the Department of Defense was renamed under the Trump administration) and other national security agencies for mission-critical applications.

The contract included terms from Anthropic's Acceptable Use Policy, which explicitly carved out two prohibited use cases: fully autonomous weapons and mass domestic surveillance of U.S. citizens.

Claude's Military Use Cases

According to Anthropic's own public statement from CEO Dario Amodei, Claude was "extensively deployed" for:

Intelligence analysis - processing and synthesizing classified data across intelligence agencies

Operational planning - supporting military commanders in planning complex operations

Modeling and simulation - war-gaming and predictive modeling of military scenarios

Cyber operations - supporting offensive and defensive cybersecurity missions

Target identification - evaluating potential strike targets using AI-assisted analysis

Claude was also reportedly deployed during the January 2026 U.S. military operation in Venezuela that led to the capture of President Nicolás Maduro, integrated into Palantir software used in that raid. This operation, which resulted in 83 deaths, became the first publicly known instance of Claude being used in a live military action.

The Pentagon's Demand for Unrestricted Access to Anthropic's Models

Tensions between Anthropic and the Pentagon had been building for months. In January 2026, Secretary of War Pete Hegseth directed the department to "utilise models free from usage policy constraints that may limit lawful military applications". The Pentagon maintained that it needed to integrate AI for "any lawful use" without corporate-imposed constraints that could impede mission success.

The Pentagon's position was that military operations unfold in "grey zones" where categorical restrictions from a private vendor could create unacceptable operational limitations. Defense officials argued that if a use case is lawful under domestic and international law, a private company should not override that determination.

The friction deepened after the Maduro operation, when an Anthropic employee reportedly contacted Palantir to ask whether Claude had been used in the raid, a move that alarmed defense officials.

Anthropic's Two Red Lines

Amodei drew two firm boundaries in a public statement on February 24, 2026:

1. Mass domestic surveillance: Anthropic supported AI use for lawful foreign intelligence missions but argued that using these systems for mass domestic surveillance was "incompatible with democratic values." Amodei warned that powerful AI could assemble scattered, individually innocuous data into "a comprehensive picture of any person's life automatically and at massive scale".

2. Fully autonomous weapons: Amodei acknowledged that even fully autonomous weapons may prove critical for national defense but stated that "today, frontier AI systems are simply not reliable enough to power fully autonomous weapons." He argued that deploying unreliable systems would endanger American warfighters and civilians. Anthropic offered to work with the Department of War on R&D to improve reliability, but the offer was not accepted.

These two exceptions, Amodei said, had "not been a barrier to accelerating the adoption and use of our models within our armed forces to date".

The Pentagon's Threats

The Pentagon responded with escalating pressure. Hegseth gave Anthropic a deadline of 5:01 PM ET on Friday, February 27, 2026, to comply. The threats included:

Contract cancellation - termination of the $200 million agreement

Supply chain risk designation - a national security label typically reserved for foreign adversaries like China or Russia, which would force all defense contractors to sever ties with Anthropic

Defense Production Act invocation - which could legally compel Anthropic to remove safeguards

Amodei pointed out the inherent contradiction: "One labels us a security risk; the other labels Claude as essential to national security".

Trump's Ban and the Fallout

The Truth Social Tirade

On February 27, 2026, after the deadline passed with no concession from Anthropic, President Trump posted on Truth Social ordering every federal agency to "IMMEDIATELY CEASE all use of Anthropic's technology". He called Anthropic a "radical left, woke" company run by "Leftwing nut jobs" and accused the company of making a "DISASTROUS MISTAKE trying to STRONG-ARM the Department of War".

Trump stated: "THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS!" He warned that Anthropic should cooperate during the phase-out period, "or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow".

The Supply Chain Risk Designation

Secretary of War Hegseth officially designated Anthropic a "supply chain risk to national security", a label never before applied to an American company. This designation required all Department of War vendors and contractors to certify that they do not use Anthropic's AI models in their operations, creating immediate problems for major defense contractors including Amazon Web Services, Palantir, and Anduril.

The General Services Administration also removed Anthropic from USAi.gov and its federal procurement vehicles.

Anthropic's Response

Amodei said the company was "deeply saddened" by the decision and called the supply chain risk designation "legally unsound," pledging to challenge it in court. He stated unequivocally: "No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons".

OpenAI Fills the Vacuum

Hours after Trump's ban, OpenAI CEO Sam Altman announced a deal with the Pentagon to deploy its models on classified networks. The agreement permitted the Pentagon to use OpenAI's technology for "any lawful purpose" while including technical safeguards against mass domestic surveillance, autonomous weapons, and high-stakes automated decisions like social credit scoring.

Notably, Altman publicly stated he supported Anthropic's position and that OpenAI's deal included the "same two limitations" Anthropic had insisted on, but embedded differently within the contract language. OpenAI also asked the government to make the same terms available to all AI companies and said it did not believe Anthropic should have been designated a supply chain risk. Elon Musk's xAI also reached a separate classified deployment agreement with the Pentagon.

U.S.-Israeli Strikes on Iran

On February 28, 2026, just hours after Trump's ban order, the United States launched Operation Epic Fury, a sweeping military campaign against Iranian targets. The operation deployed:

B-2 stealth bombers striking hardened, underground nuclear and missile facilities with 900 kg bombs

Tomahawk cruise missiles launched from sea, capable of striking targets 1,600 km away

F-35 and F/A-18 fighter jets conducting precision air-to-ground missions

LUCAS suicide drones - low-cost one-way attack drones modeled after Iran's Shahed designs, used in combat for the first time

MQ-9 Reaper drones for surveillance and strike missions

How Anthropic’s Claude AI Was Likely Used in the US-Iran Strikes

We have been closely tracking the extraordinary events of the past 72 hours.

Given Anthropic’s deep prior integration into classified US networks (publicly confirmed through 2025 contracts), the technical difficulty of an immediate full shutdown, and the well-documented performance advantages of Claude models, it is reasonable to assume the military continued limited, air-gapped use of these models during planning and execution. Below is my evidence-based reconstruction of how Claude was most likely employed. These are informed assumptions and logical predictions drawn from open-source intelligence, prior military AI usage patterns, and technical papers on large-language-model applications in defense. Nothing here is officially confirmed (classified operations rarely are), but the operational logic is compelling.

1. Intelligence Fusion: Turning Data Overload into Actionable Insight

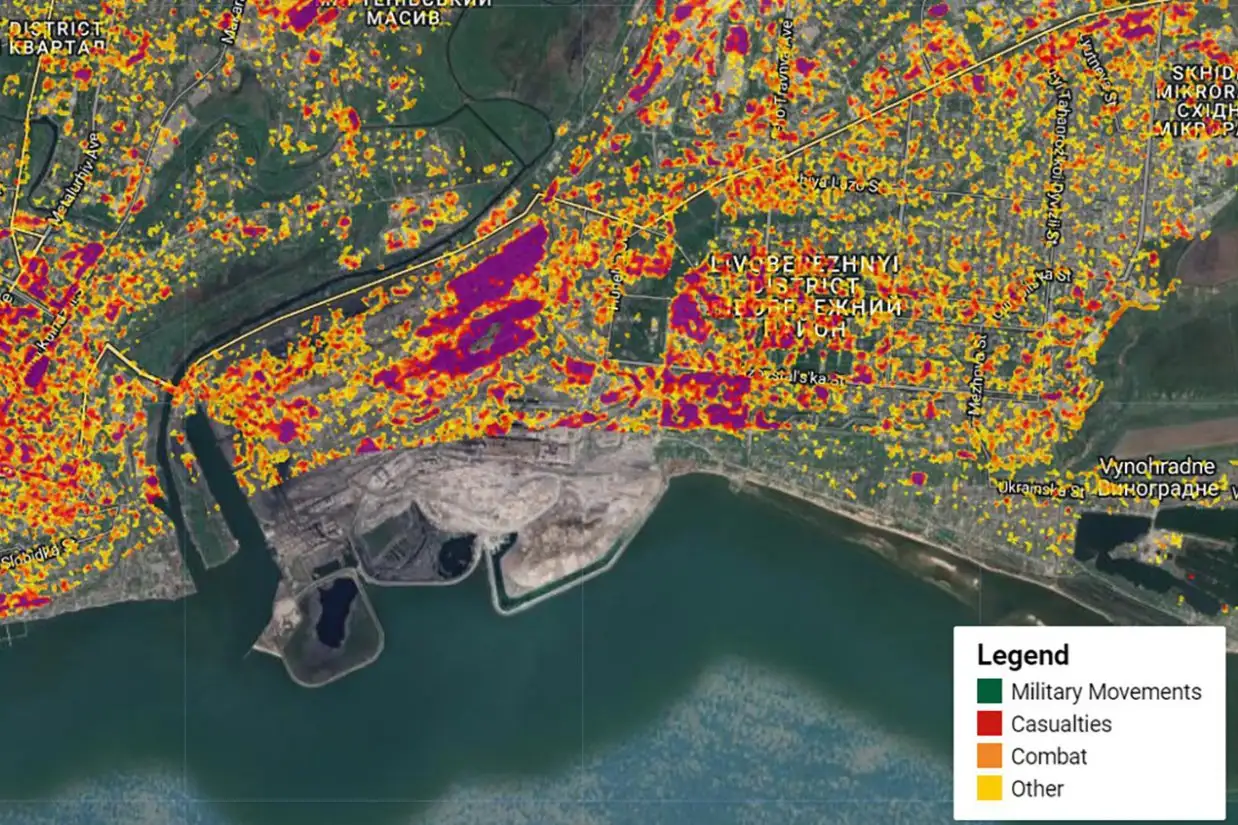

Any large-scale strike on Iran would begin with an overwhelming flood of multi-intelligence streams: commercial satellite imagery, SIGINT intercepts, HUMINT, Farsi-language open-source material, and seismic readings.

Based on how Claude has been used in earlier DoD pilots (and similar systems in allied forces), I predict the models were deployed via secure retrieval-augmented generation (RAG) pipelines to synthesize this data in minutes rather than days. The AI would have generated daily “leadership location probability maps,” identified IRGC command nodes, and flagged underground facilities with attached confidence scores.

If this assumption holds, it explains the remarkably short planning window that enabled a surprise daytime attack on February 28, something manual analysis alone could not have achieved at this scale.

2. Target Validation and Collateral-Damage Minimization

One of Claude’s signature features is its “Constitutional AI” framework, in which the model is trained to follow a written set of principles rather than relying on hard-coded rules. In a military context, this could translate into automated ROE (rules of engagement) enforcement: no strike on a structure unless confidence that it is a legitimate military target exceeds 95%, and automatic flagging of mosques, hospitals, and schools.

We hypothesize that planners fed the model decades of captured Iranian blueprints plus real-time satellite change-detection data to build 3D digital twins of hardened targets (Fordow, Natanz, etc.). The system would then simulate bomb penetration physics and automatically de-conflict strikes where civilian activity was detected.

This aligns perfectly with the reported near-zero civilian casualties in urban areas despite strikes on Tehran ministries and IRGC headquarters. If true, Claude’s safety layers ironically became a precision advantage rather than an obstacle.

3. Massive-Scale War-Gaming and Scenario Simulation

Perhaps the most powerful predicted use case: running thousands of full-theater Monte Carlo simulations before any aircraft left the ground.

Assuming access to a digital-twin model of the Persian Gulf theater, Claude could have stress-tested 10,000+ variations incorporating Iranian missile salvos, proxy drone swarms, weather, allied F-35 orbits, and potential third-party resupply. It would have output the optimal strike sequence (air defenses → C2 nodes → missile factories → leadership targets) and predicted enemy retaliation timelines with high accuracy.

Real-time adjustments to low-cost LUCAS suicide drones (the US Shahed-136 equivalent) during the first 24 hours would also fit this pattern. Such machine-speed rehearsal is the only plausible explanation for the operation’s reported efficiency and shock effect in under 48 hours.

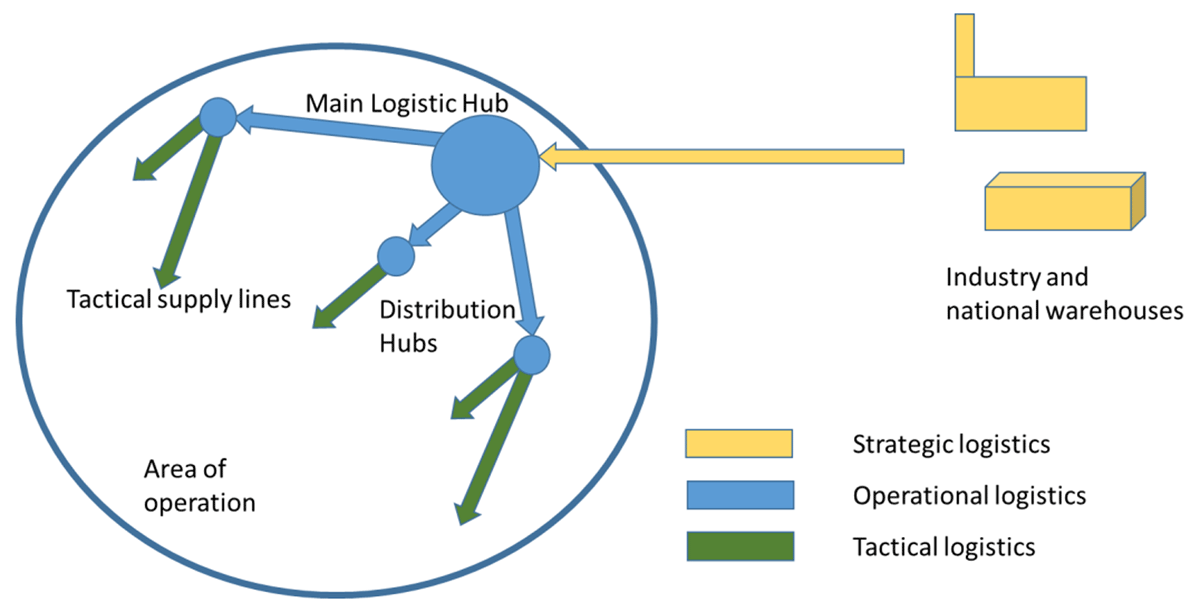

4. Operational Planning and Logistics Synchronization

Generating a complete joint task-force execution checklist for B-2s, Tomahawks, carrier-based fighters, cyber effects, and drone waves normally takes weeks. I predict Claude compressed this into hours by ingesting commander intent, fuel states, munitions stockpiles, and allied coordination data.

When Iranian missiles struck Al Udeid and Fifth Fleet facilities, the model could have instantly rerouted tankers and munitions. It may even have drafted Farsi-language psychological operations scripts for the “regime collapse” messaging campaign. These are standard large-language-model applications already demonstrated in unclassified defense exercises.

5. Real-Time Battle Damage Assessment and Adaptive Campaigning

The strikes are ongoing. Every six hours, new satellite passes and drone footage would be fed back into the system for automated battle damage assessment reports, complete with confidence percentages and re-prioritized target lists.

This closed-loop capability would allow the campaign to accelerate rather than stall, forecasting regime fracture indicators such as internal defections or oil-terminal sabotage. If Claude is still running in classified environments (despite the public ban), this adaptive edge could explain why Iranian responses appear slower than expected.

Why Claude Specifically, and Why a Full Cutover Was Probably Impossible

Public reporting shows Anthropic’s models were the first frontier AI deeply embedded on US classified networks, fine-tuned for national-security use since 2025, and uniquely strong on multi-step strategic reasoning with non-English sources. OpenAI alternatives, while now being fast-tracked, are known to hallucinate more on Farsi material and lack the same native constitutional guardrails.

From an outside perspective, the six-month phase-out window ordered by the White House was almost certainly a political statement rather than an immediate technical reality. Mid-campaign removal of a system already baked into intelligence pipelines would risk operational paralysis, exactly the scenario no commander would accept.

The Bigger Picture: A New Era of AI-Augmented Warfare

If my assumptions are correct, Operation Epic Fury marks the first time a single commercial AI model acted as the central nervous system for a major US combat operation. The models did not fire weapons (human operators retained full authority), but they compressed planning timelines, sharpened precision, and enabled simulations at a scale previously unimaginable.

This raises profound questions about the future of conflict. How much of modern warfare will be decided by which nation’s AI can think faster and see more clearly? And what happens when the private companies building these systems clash with the governments that need them most?

We may never see the classified after-action reports, but the public pattern is already clear. Anthropic’s Claude did not start this war, yet the speed and precision of America’s response suggest its influence was far greater than any official statement will ever admit.
